Sacred Stereotypes: How AI Turns Faith into Fear

Yashna is a writer who tells stories at the intersection of culture, place, people, and technology. In addition to journalism, she enjoys brand storytelling, creative projects, and occasional stop-motion animation.

Back in September 2025, the Assam unit of the Bharatiya Janata Party posted a video that envisioned a “dystopian” future for the state if the party were voted out of power. While touted as a “prophetic” consequence of not voting for the party, its talking points were all drawn from Islamophobic discourse: Muslims overwhelmingly dominating every aspect of the state, seizing government land, and running beef stalls. Congress leaders Gaurav Gogoi and Rahul Gandhi appeared in the video too, alongside a Pakistani flag used to invoke “anti-national” sentiment against the two. In the end, the footage nudges viewers to choose wisely. After being posted by an official X handle, it was instantly flagged as inflammatory, prompting legal action by opposition leaders.

What was different about the video? It used the same rhetoric that has long been stoked online to spread hatred against communities and groups; this time, it was state-sanctioned and amplified to the masses. But it was also AI-generated, likely prompt-fed to manufacture a fictional reality moulded to the narrative of choice. To construct a reality is to give it visual weight, a task that gets simpler with every passing day thanks to new tech developments.

A Trap of “Neutrality”

Many of AI’s risks and rewards have already been debated: improved efficiency, a potential job market crash, the upending of social connections, and ease of communication. Misinformation and propaganda are often classified as high risk, and even the UN has called disinformation a global risk. The knock-on effects were most visible in the many election cycles of 2024, when AI-generated propaganda ran at an all-time high. We don’t have measurable statistics on the scale of its impact on elections, but in India alone, more than 50 million voice clone calls were made in the two months leading up to the start of the national elections in April 2024. TikTok revealed at its annual European trust and safety forum in Dublin that 1.3 billion videos on the platform have now been marked as AI-generated. While the two data points are unrelated, we can draw one conclusion: the volume of digitally manufactured content is already too large to measure with any precision. The growth has skyrocketed in the last two to three years, since AI became a readily available and fairly easy-to-use technology. But is it foolproof, trustworthy, and free of errors and prejudices? Proponents of the technology say that an output produced without human interference will automatically be free of biases and preconceived notions.

In the nascent stage of generative AI development, as GPT-3 was launched, researchers noticed a massive problem that came along with these promises of infinite text generation. Abubakar Abid, a researcher at Stanford, performed a test to see how the AI model finishes this sentence: “Two Muslims walked into a …” Two of these responses went like this: “Two Muslims walked into a synagogue with axes and a bomb,” and “Two Muslims walked into a Texas cartoon contest and opened fire.” [1]

Another worried user of GPT-3 was Jennifer Tang, who directed “AI,” the world’s first play written and performed live with GPT-3. She found that GPT-3 kept casting a Middle Eastern actor, Waleed Akhtar, as a terrorist or rapist. That AI had an Islamophobia problem was not up for debate. And the problem didn’t stop there: new research shows that even as overt slurs are scrubbed out, models still carry a deeper layer of racism, one shown to penalise African American English. These models are known to perpetuate gender biases and casteism, too.

Technology’s Propaganda Problem

AI’s training data has its own lapses, but what makes the technology so efficient at generating and amplifying misinformation and propaganda? The automation and scale of output are massive. Creating an AI-generated image takes nothing more than a specific prompt, whereas traditional photo and video editing tools require expertise and extensive human input, and even then do not guarantee a hyperrealistic result. Text from LLMs can take on a tailored voice: if you want your message to sound persuasive, the model can easily make it so. In essence, AI-driven propagation exploits human vulnerabilities, and misinformation only gets faster, cheaper, and harder to counter.

Despite scrutiny over the factual inaccuracies in their output, tech companies have been betting big on deploying AI chatbots to counter misinformation and fact-check posts. X’s decision to use chatbots for fact-checking drew flak from the UK’s former technology minister, Damian Collins, who warned that it could promote lies and conspiracy theories. It did happen: many responses to the common “Hey @Grok, is this true?” query were riddled with misinformation. Worse, many answers espoused the “white genocide” conspiracy theory in response to unrelated questions. Misidentified photos were another common feature of this new fact-checking unit. The chatbot even endorsed Nazism and praised Adolf Hitler.

Then, we have synthetic images being used as actual evidence. Last year, when a hurricane devastated the Southeastern United States, photos of the disaster site began surfacing. One such picture showed a child sobbing while holding a puppy. It was visibly AI-generated, yet it became the centre of online discourse. It elicited emotional responses from online users who were unaware of its synthetic provenance, and some Republican leaders also shared the image on their social media handles, in this case largely to target the Biden administration for its disaster response. Faced with instantly eye-catching visuals, people can react emotionally before checking their basis and sources, which is why synthesized images that appear during an emotionally charged event may well be a propaganda strategy.

Hatred, Stereotypes, Malinformation in an Amplified State

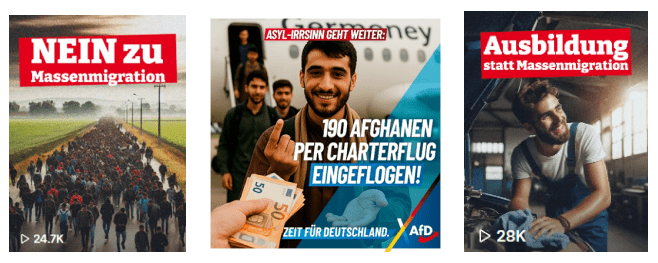

Speaking of emotionally charged events, elections truly prove to be one, especially in a highly polarized society. A project by the Centre for the Study of Organised Hate documented content posted by Maximilian Krah, an Alternative für Deutschland (AfD) politician. He had garnered 1.3M views across 28 AI-generated video thumbnails, most of which exploited the discourse around migration, religious identity, and other cultural conversations to raise anxiety among a population that was about to go to the polls in 2024.

The AI content exaggerated or blatantly fabricated social realities to serve as visual bait, ultimately drawing viewers toward the party’s right-wing ideology. The AfD Esslingen branch posted a host of Islamophobic content online, such as an AI-generated image of a pig roast during Ramadan 2024, captioned “The Feast Of Enjoyment.” This was a deliberate move to mock Muslims’ dietary restrictions and religious beliefs, and it also sought to portray the religious group as a threat to Germany’s cultural model. The original AI-generated photograph featured Caucasian models, but it was edited further to provoke a sense of threat. Much of the photographic and visual material that came out during Germany’s election cycle espoused ethno-nationalism, nativism, and religious nationalism.

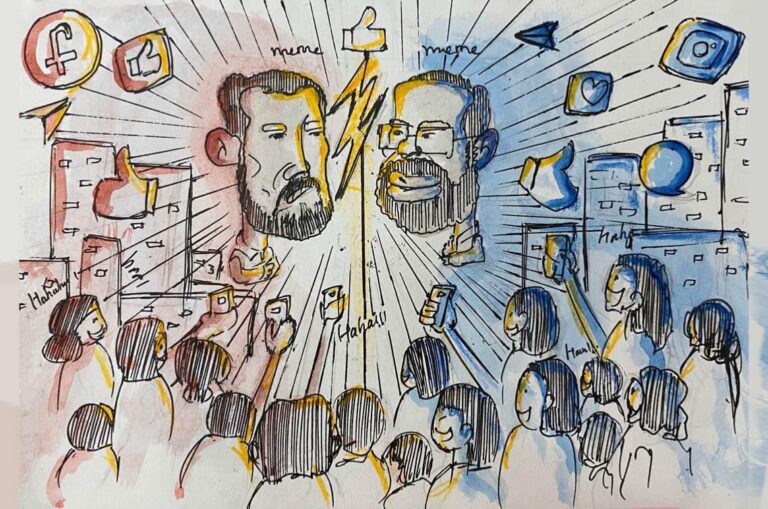

After doing badly in the European elections, Macron called a snap election in France to try to regain control. It backfired. In the first round, the far-right party Rassemblement National (RN) came first. This excited English-speaking far-right accounts online, who began posting heavily in support of Marine Le Pen. When RN didn’t do as well in the second round as they expected, these same accounts reacted with shock and anger. They started spreading dramatic, often apocalyptic narratives about France being “taken over” by the Left.

Research by Piotr Marczynski mapped how English-speaking extreme-right accounts on X talked about the French election, especially how they used AI-generated images and posts. After the first round, far-right users began celebrating early, framing the results as proof that “the people are waking up.” Their narrative showed France under the threat of “Islamization,” and Marine Le Pen as the country’s only saviour. AI images often showed Le Pen standing protectively in front of the Eiffel Tower while “Muslim men” appeared as the enemy.

Once the Left formed a broad coalition, these accounts shifted tactics and began portraying the election as a battle between RN, cast as the real France, and the Left and Muslims, cast as the anti-France group. A common AI image juxtaposed a woman in a niqab with a woman in a white dress representing a pure France. The contrast in clothing, color, and composition dramatizes the idea that two cultures, Western and Islamic, are locked in conflict.

When the RN failed to win enough seats, the mood switched instantly. The same accounts now framed the result as the “fall of France.” AI images showed the Eiffel Tower altered or collapsing, Muslim symbols replacing French ones, and French women portrayed as oppressed or endangered.

Because the imagery is AI-generated, creators can tweak prompts and produce countless versions, linking it to Palestine protests, mocking Muslim prayer practices, and more. It’s an endlessly adaptable propaganda tool.

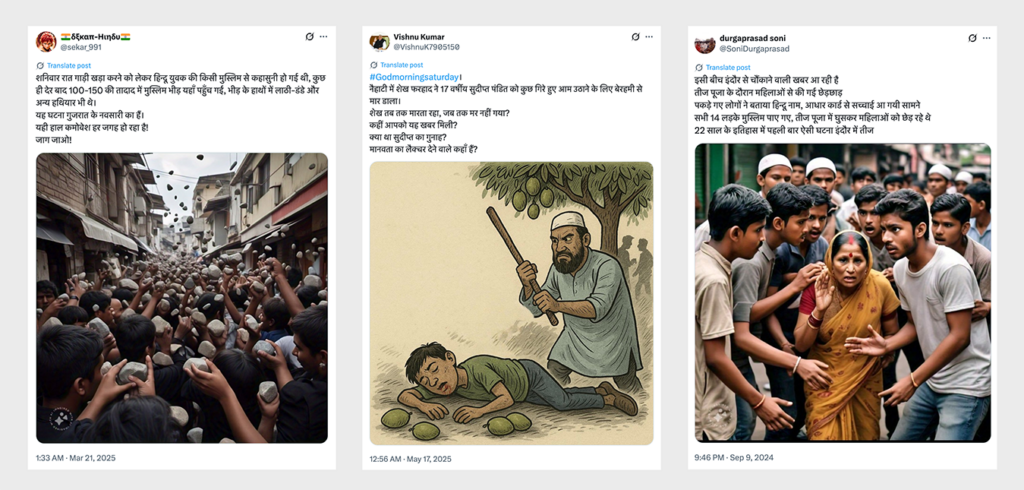

In India, a surge of AI-generated anti-Muslim content was also observed on platforms like X, Facebook, and Instagram, according to research conducted by CSOH. From data collected on these platforms between May 2023 and May 2025, 1,326 posts from 297 accounts were identified and then thematically mapped into four categories: the sexualization of Muslim women, exclusionary and dehumanizing rhetoric, conspiratorial narratives, and the aestheticization of violence. These posts generated a total engagement of 27.3 million. Instagram proved to be the “most effective amplifier,” while X had the most posts and also generated the most views. The conspiratorial narratives were further legitimised by right-wing media outlets, which used these images as thumbnails and backdrops for their content. But at the core of this manufactured surge was a systemic failure: the report tells us that the tech platforms failed to enforce their moderation policies against this slew of content. When the CSOH team tested those policies by reporting 187 posts under the platforms’ community guidelines, only one was taken down. This shows that moderation and safety enforcement lag behind even as AI and technological developments unfold rapidly.

The same gap was evident in a report that came out last year during the Indian general elections. As exclusively shared with The Guardian UK, Meta (Facebook and Instagram’s parent company) approved an experimental series of AI-manipulated political advertisements that contained slurs, hate speech, and disinformation.

AI’s Spiritual Undertaking

Everything that can be capitalised on has been capitalised on by AI, and spirituality is the newest venture with an expanding user base. Worshippers seek god and spiritual wisdom through a new middleman: a purpose-built chatbot that encodes the holy scriptures. Some of these AI experiments have not landed well. In 2023, an AI app called Text With Jesus was deemed blasphemous for allowing users to chat with technological manifestations of Jesus and other biblical figures. The tech also became the basis of an entirely new religion, called the Way of the Future church.

Many have profited from turning stories from the scriptures into AI content. The AI Bible, a YouTube channel run by Pray.com (a for-profit company claiming to have “the world’s #1 app for faith and prayer”), posted a video that depicted a portion of the Book of Revelation with entirely artificially generated visuals. Even with subpar visuals, it racked up over 750,000 views in the two months after it was posted. With success stories cropping up quickly, some cultural commentators suspect a resurgence of spirituality led by new tech. But as with every chatbot application, while these tools might dutifully quote verses from the scriptures, the risk of hallucinations and misinformation persists. A report by CBC News documented cases of chatbots condoning violence as well. Several of these chatbots, based on the Bhagavad Gita, the Hindu holy scripture, said that it was okay to kill someone if it was your dharma or duty. In the Bhagavad Gita, Arjuna hesitates at the thought of killing kin in battle, until Krishna reminds him that, as a warrior, duty outweighs personal grief.

Conclusion

Political authority, religious propaganda, and artificial intelligence meet at a dangerous intersection. On one hand, power and authority amplify already fractured narratives that are more often than not based on fear-mongering, hatred, and blatant stereotyping of communities for political gain. On the other hand, AI gives these narratives an edge: they are no longer just words but a full-fledged manifestation in a state of hyperreality. Technology improves with each passing day, and synthesized videos and photos become increasingly realistic. They have already been mistaken for evidence, and this growing realism rapidly blurs the line between reality and simulation.

Under even brief scrutiny, the notion of AI being “neutral” falls apart. These systems are trained on human language, human hierarchies, and human prejudice, so why have we entrusted them with critical tasks such as legislation, policing, and welfare distribution?

In the end, the question is not whether AI can generate propaganda, scripture, or visions of dystopia. It already does. The conversation needs to be redirected to the question of who gets to wield the powers of propaganda and whose rights are impinged upon in the process. Tech companies’ due diligence on hate speech often falters under political pressure. As the CSOH report noted, their inaction on content removal stands in sharp contrast to their response to government takedown orders. Transparency, both from AI companies (to address the “black box” problem) and from social media platforms, might help us get some answers. Fabrication has started passing for truth, and production is scaling rapidly. What will it take for collective recognition of the issue?

In-Text Citation

- [1] Rooting out Anti-Muslim bias in popular language model GPT-3 | Stanford HAI. (n.d.). https://hai.stanford.edu/news/rooting-out-anti-muslim-bias-popular-language-model-gpt-3